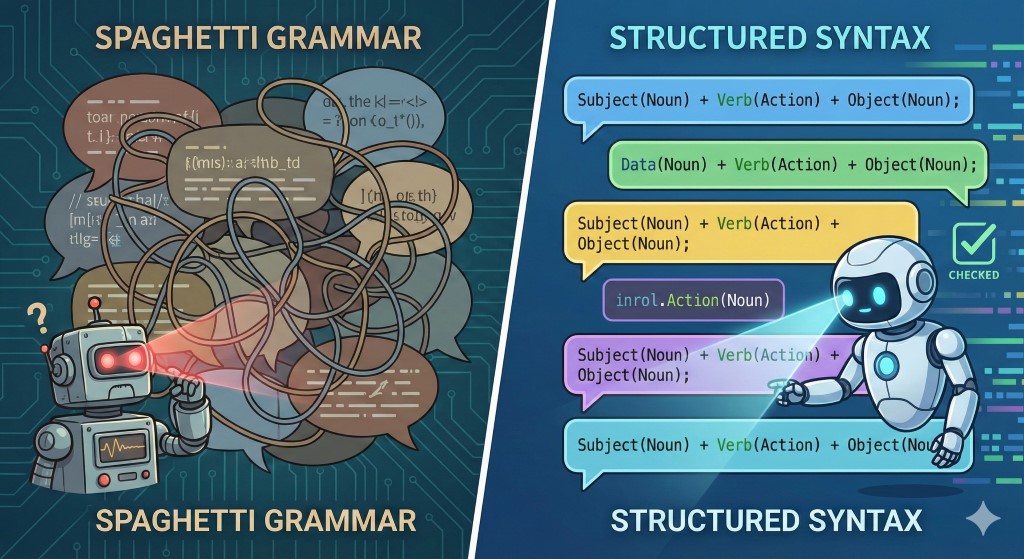

Linguistic Technical Debt is the accumulation of "fossilized errors": grammatical mistakes you make repeatedly because they were "good enough" to be understood during your early learning phase. Just as quick-and-dirty code lets you ship an MVP faster but leaves a fragile codebase that blocks future scaling, relying on "Me Tarzan, You Jane" grammar creates a hard ceiling that stops you from reaching C1 fluency. To break through the intermediate plateau, you need to stop adding new features (vocabulary) and start refactoring your existing codebase.

The Architecture of Failure: How "Good Enough" Becomes a Bug

In software engineering, we often make tradeoffs. We hardcode a variable or skip a unit test to meet a deadline. We call this Technical Debt. We promise to fix it later, but often, we don't.

In language learning, the same process happens. When you first moved to Berlin, London, or Warsaw, your goal was survival. You prioritized Meaning over Form.

If you said "I go store yesterday," the clerk understood you.

Result: The transaction succeeded.

Brain's Log: "Success! This syntax works. Save to production."

This is where the bug becomes a feature. Because the communication was successful, your brain fossilized the incorrect grammar. Now, years later, even though you know the rule for the Past Simple, your brain executes the cached, incorrect version (I go) before you can access the correct library.

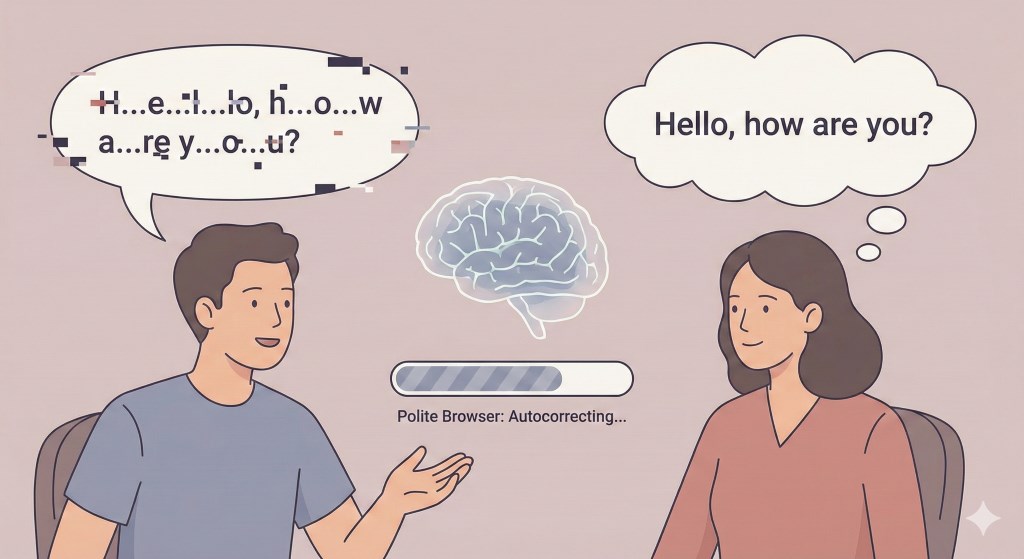

Why don't native speakers correct me? (The "Forgiving Browser" Problem)

You might wonder: "If my grammar is so bad, why hasn't anyone told me?"

The answer lies in how humans process input. Native speakers act like modern web browsers. If you write broken HTML (missing closing tags, bad nesting), Chrome doesn't crash; it guesses what you meant and renders the page anyway. Humans do the same. They are "polite browsers."

You say: "The chair is near of the bed."

They hear: "The chair is near the bed."

Research by Shehadeh (2003) found that over one-third of learner errors go completely unchallenged in natural conversation. By being polite, your conversation partners are inadvertently compounding your technical debt. They are marking your broken code as "Verified."

The Refactoring Workflow: A 3-Step Protocol

You cannot fix fossilized errors by "just speaking more." That is simply running the buggy code more often. You need a dedicated Refactoring Sprint. Here is a manual protocol to debug your speech using the "Audit, Isolate, Patch" method.

Step 1: The Audit (Logging)

You cannot refactor code you haven't read. Since your brain auto-corrects your own voice while speaking (a latency issue in the phonological loop), you need external logs.

Action: Record yourself speaking freely for 2 minutes about your day.

Review: Listen to the recording. Do not listen for meaning. Listen for syntax.

The Output: Write down every error you hear. This is your Bug Backlog.

Step 2: Isolate the Bug (Scope Management)

A common failure mode is trying to fix everything at once. This leads to cognitive overload (Stack Overflow).

Action: Pick ONE recurring error from your backlog (e.g., "confusing he and she" or "forgetting the third-person 's'").

The Rule: Ignore all other errors for this sprint. Focus exclusively on patching this single function.
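If you log each entry in your Bug Backlog as a short label, choosing the one recurring error to isolate becomes a simple frequency count. The sketch below is a hypothetical illustration: `pick_sprint_target` and the labels are made up for this example, and it assumes you tag each error yourself while reviewing your recording.

```python
from collections import Counter


def pick_sprint_target(backlog: list[str]) -> str:
    """Return the most frequent error label in the backlog.

    Hypothetical helper: each entry is a label you assigned while
    reviewing your recording in Step 1.
    """
    if not backlog:
        raise ValueError("backlog is empty: record and review first")
    # most_common(1) returns [(label, count)] for the top label;
    # ties go to the label encountered first.
    label, _count = Counter(backlog).most_common(1)[0]
    return label


# Labels logged from one two-minute recording (illustrative data).
backlog = [
    "past tense",
    "third-person s",
    "past tense",
    "preposition after near",
    "past tense",
    "third-person s",
]

print(f"Sprint target: {pick_sprint_target(backlog)}")  # Sprint target: past tense
```

Everything else in the list stays in the backlog for a later sprint.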

Step 3: The Patch (Unit Testing with Drills)

In code, a Unit Test verifies that a specific function behaves as expected under various inputs. You need to build Unit Tests for your grammar.

Action: Create "forced output" drills.

Example: If your bug is the past tense, write 10 sentences about yesterday. Read them aloud. Then, try to generate 10 new sentences about yesterday spontaneously.

Pass Criteria: Can you produce the structure correctly 10 times in a row without hesitating? If not, the refactor failed. Roll back and repeat.
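The pass criterion is easy to state as code if you score each spontaneous attempt as pass or fail. This is a minimal sketch under one assumption: you mark an attempt `False` whenever you either made the error or hesitated. The function names are hypothetical.

```python
def longest_streak(results: list[bool]) -> int:
    """Length of the longest run of consecutive passing attempts."""
    best = current = 0
    for passed in results:
        # Extend the run on a pass; reset it on an error or hesitation.
        current = current + 1 if passed else 0
        best = max(best, current)
    return best


def refactor_passed(results: list[bool], required: int = 10) -> bool:
    """True if the structure was produced correctly `required` times in a row."""
    return longest_streak(results) >= required


# One drill session: an early slip, then ten clean attempts.
session = [True, True, False] + [True] * 10
print(refactor_passed(session))               # True
print(refactor_passed([True] * 9 + [False]))  # False: roll back and repeat
```

Counting a hesitation as a failure matters here: a correct sentence you had to stop and assemble is still running through the slow path, not the fossil-free one.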

Can I automate the Code Review? (The "Linter" Solution)

The manual workflow above works, but it is tedious. It requires you to be your own QA engineer. In a real dev environment, we don't check for syntax errors manually; we use a Linter or a Compiler.

This is why we built DialogoVivo. We wanted to automate the "Audit" and "Unit Test" phases of language learning.

We designed our Validation Agent to act as a strict Compiler for your spoken language. Unlike a polite human (who ignores the bug), the Validation Agent throws an exception in real time.

- The Linter: When you speak in a DialogoVivo scenario, the AI analyzes your syntax. If you say "I go store," it pauses the simulation.

- The Error Log: It highlights the specific diff: Expected "went", found "go".

- The Documentation: It explains, in your native language, why the error occurred, instantly patching your knowledge gap.
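To make the "diff" idea concrete, here is a deliberately naive toy. It is not how the Validation Agent works internally. It only reproduces the shape of the error message above, assuming a tiny hand-written verb table and a crude rule ("a past-time word plus a base-form verb means an error"). `lint_past_tense`, `PAST_FORMS`, and `PAST_MARKERS` are hypothetical names.

```python
import re

# Hypothetical hand-written table: base form -> Past Simple form.
# It covers only a few example verbs; a real checker needs a parser.
PAST_FORMS = {"go": "went", "eat": "ate", "see": "saw", "buy": "bought"}

# Words that suggest the sentence describes a finished past event.
PAST_MARKERS = {"yesterday", "ago", "last"}


def lint_past_tense(sentence: str) -> list[str]:
    """Return linter-style messages for base-form verbs in a past-time sentence."""
    words = re.findall(r"[a-z']+", sentence.lower())
    if not PAST_MARKERS.intersection(words):
        return []  # no past-time marker, so this naive rule does not fire
    return [
        f'Expected "{PAST_FORMS[w]}", found "{w}"'
        for w in words
        if w in PAST_FORMS
    ]


print(lint_past_tense("I go store yesterday."))
# ['Expected "went", found "go"']
print(lint_past_tense("I went to the store yesterday."))
# []
```

A keyword rule like this misfires easily (for example, on sentences that mention both yesterday and tomorrow), which is why real feedback has to look at the whole sentence rather than a word list.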

By treating conversation practice as a simulation rather than a social interaction, you create a safe environment to pay down your technical debt. You can crash the program in the simulator 50 times so that when you deploy to production (real life), your code runs clean.

Ready to refactor your speech?

Stop deploying buggy code. Download DialogoVivo on Android and run your first diagnostic simulation today.

Reference: Shehadeh, A. (2003). Learner output, hypothesis testing, and internalizing linguistic knowledge.